Adaptive Audio Augmentation (AAR)

An independent design research project conducted as part of a Master’s thesis at Kristianstad University (Spring 2024). It explored how AI-based sound enhancement can help people stay aware and feel safer in noisy environments by filtering, amplifying, or highlighting sounds based on context and user needs.

Academic supervisor

Kristianstad University

Service

Conceptual Design

Team

1 Digital Designer, Interview Participants – Diverse users from different noise-exposed environments

Review Support – UX peers and academic feedback

Timeline

6 months

Goal

To investigate and design a concept where artificial intelligence adapts real-time audio experiences to improve awareness and well-being in loud, distracting, or potentially hazardous environments such as city streets, public transport, or construction sites.

Challenge

Modern environments often expose people to overwhelming or unsafe soundscapes. The key challenges in this project were:

- Supporting situational awareness without overwhelming users

- Designing AI-driven sound personalization that feels transparent and non-invasive

- Addressing privacy and ethical concerns around real-time audio processing

- Creating a system that adapts to diverse contexts and user preferences

- Balancing simplicity in the interface with powerful audio capabilities

Outcome

- A well-documented design concept for adaptive audio augmentation

- A prototype with clear user flows and scenarios

- A risk framework for AI-driven sound solutions

- A foundation for future exploration in smart hearing, safety tech, or immersive audio interfaces

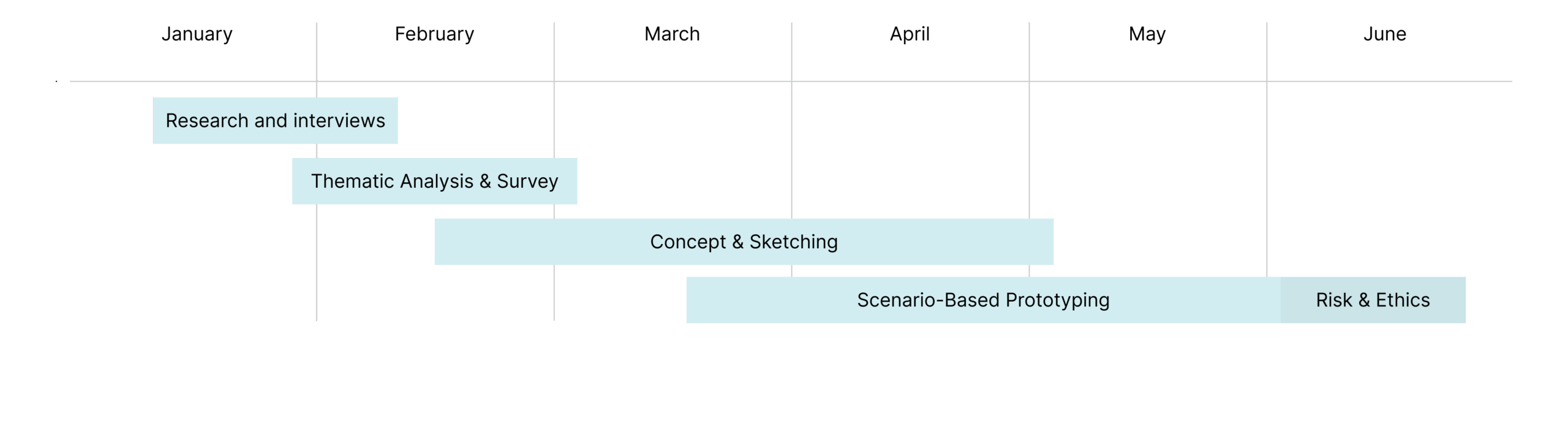

Project timeline

How it started

The project began with research into sound environments, health impacts of noise, and current smart audio technologies. Through interviews and literature review, I identified a gap: current tech focuses on passive noise reduction, not adaptive sound enhancement for awareness and safety.

The early stages focused on:

- Defining key use cases

- Mapping user frustrations

- Framing the problem from both UX and ethical perspectives

Design process

- 1. Research & Interviews

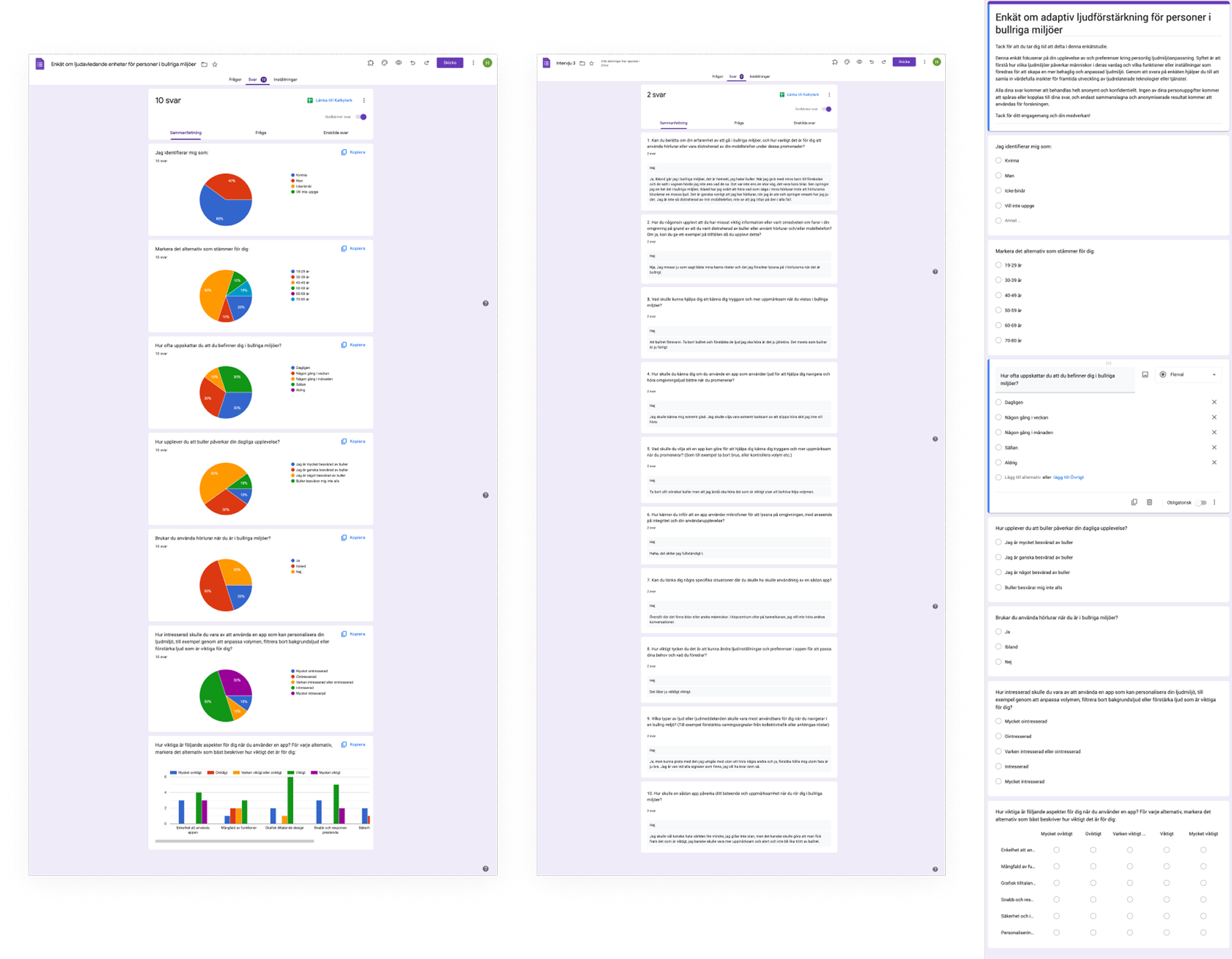

I conducted qualitative interviews and reviewed academic and UX literature on auditory awareness, safety, and human perception in noisy spaces.

- 2. Thematic Analysis & Survey

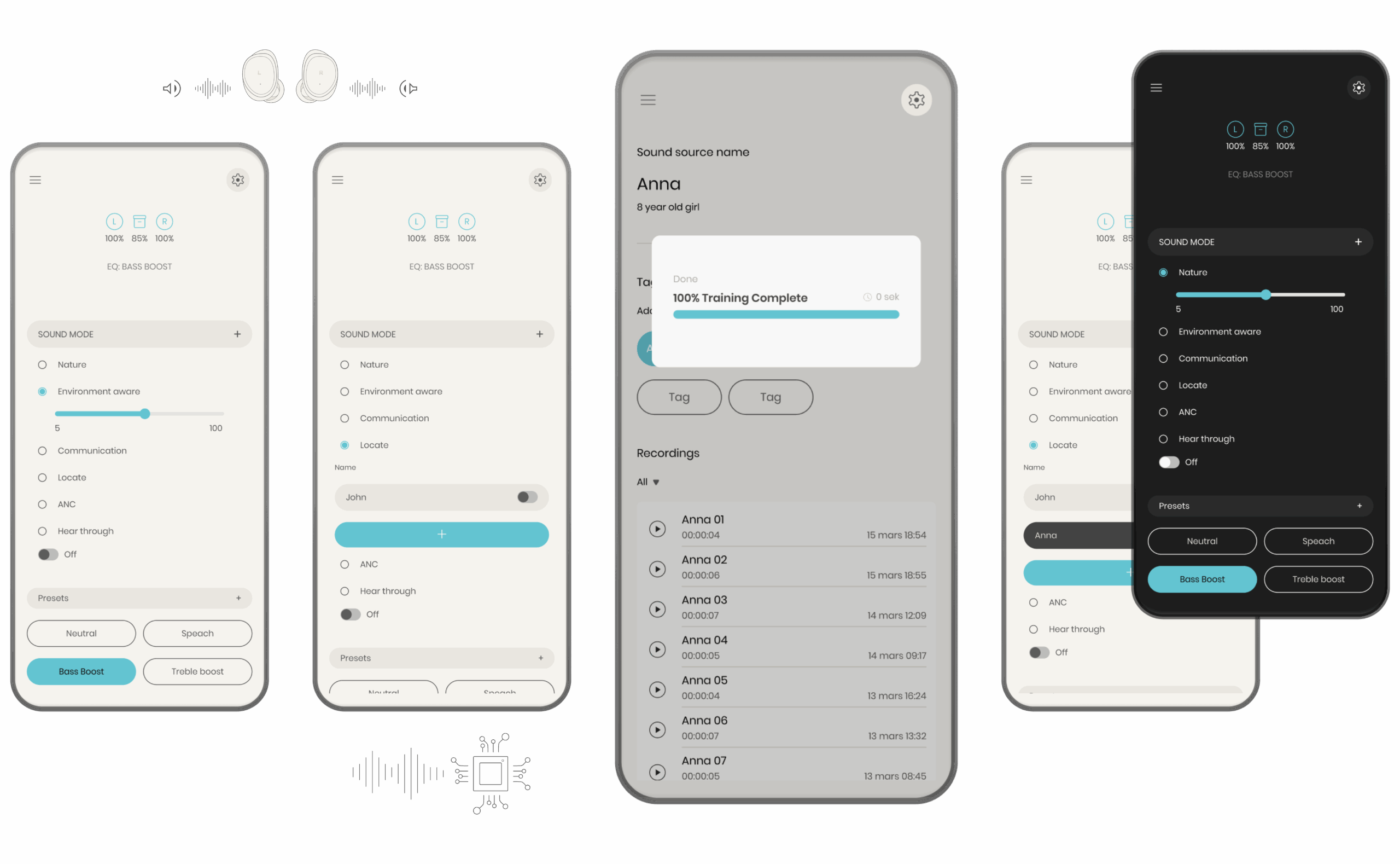

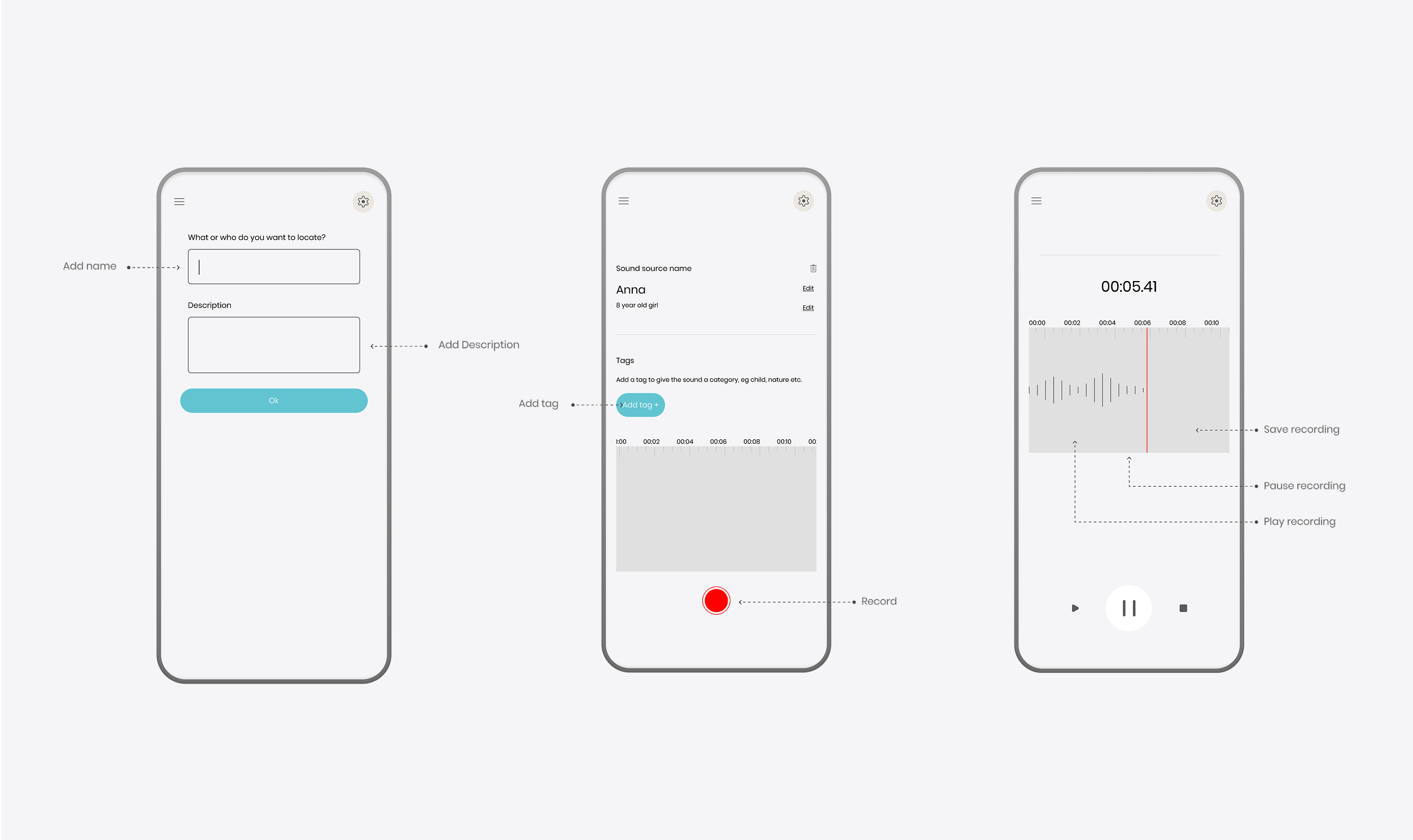

Using thematic analysis, I identified five key user needs: safety, clarity, simplicity, transparency, and control. These were validated via a short online survey (n=10).

- 3. Concept & Sketching

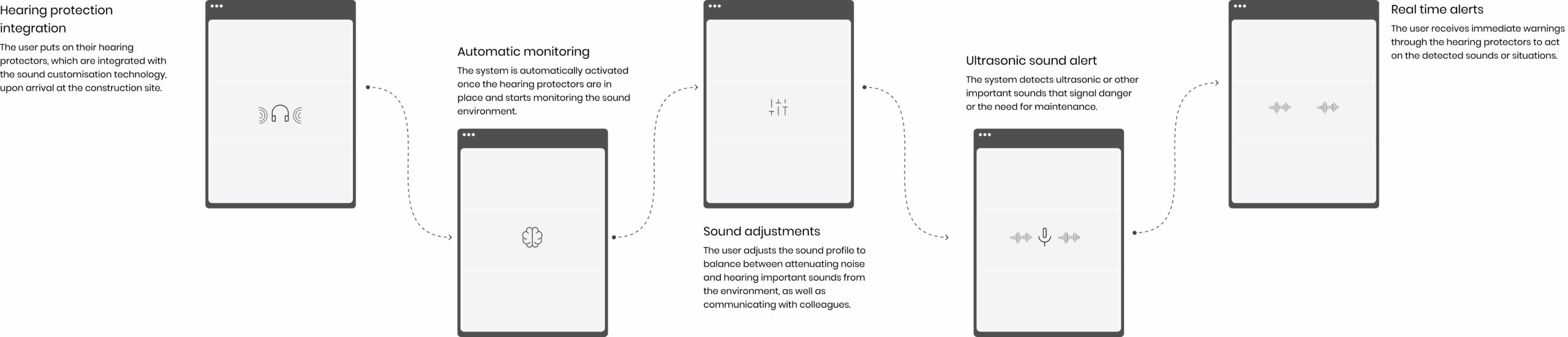

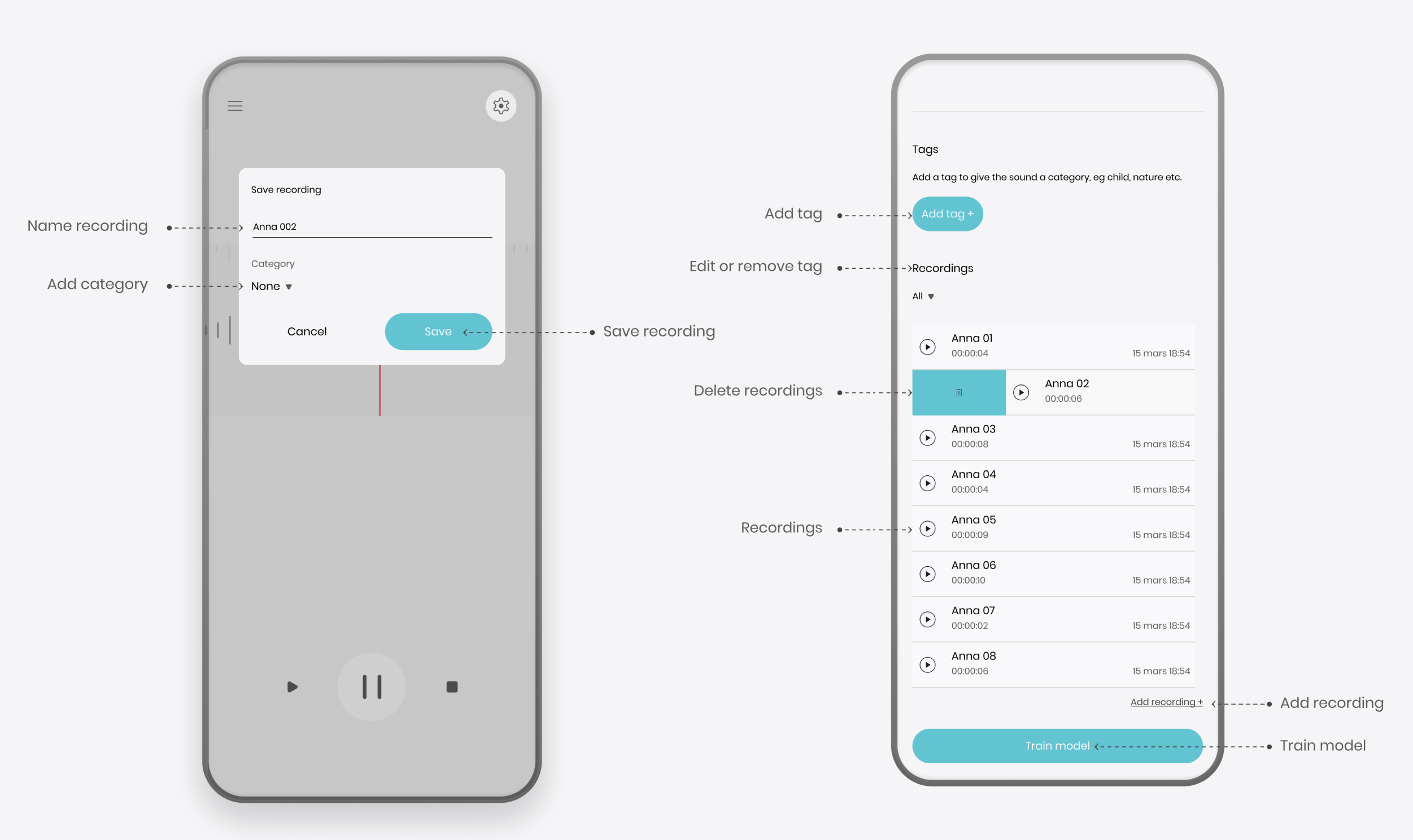

I developed interface concepts for mobile and wearable audio controls. Ideas included sound filtering, adaptive modes (e.g., “city”, “machine”, “crowd”), and real-time audio cues.

- 4. Scenario-Based Prototyping

I created interactive scenarios for different environments (e.g., crossing a city street, working on a construction site, and navigating a theme park), each showing how the system responds.

- 5. Risk & Ethics

A light ethical analysis considered issues such as AI transparency, false positives, user dependency, and privacy. These considerations informed the design decisions and the discussion of long-term implications.

Design System Elements

Even as a conceptual project, I applied a lightweight system-thinking approach to interface design:

- Sound categories with visual labels (e.g., alerts, voice, ambient)

- Icon system for contextual feedback

- Color-coded modes for clarity

- Minimal UI layers to avoid overloading users
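As a rough illustration of how the elements above could be held together, the sketch below maps each sound category to a label, icon, and color. The class names, icon identifiers, and hex values are hypothetical; the project itself does not specify them.

```python
from dataclasses import dataclass
from enum import Enum


class SoundCategory(Enum):
    """Sound categories named in the design system (alerts, voice, ambient)."""
    ALERT = "alerts"
    VOICE = "voice"
    AMBIENT = "ambient"


@dataclass(frozen=True)
class CategoryStyle:
    label: str  # visual label shown next to the icon
    icon: str   # hypothetical icon identifier
    color: str  # hypothetical hex color used for color coding


# Illustrative values only; these are assumptions, not the project's tokens.
STYLES = {
    SoundCategory.ALERT: CategoryStyle("Alerts", "icon-alert", "#D32F2F"),
    SoundCategory.VOICE: CategoryStyle("Voice", "icon-voice", "#1976D2"),
    SoundCategory.AMBIENT: CategoryStyle("Ambient", "icon-ambient", "#757575"),
}


def style_for(category: SoundCategory) -> CategoryStyle:
    """Look up the single visual treatment for a sound category."""
    return STYLES[category]
```

Keeping one style entry per category is one way to keep UI layers minimal: every screen that shows a sound draws on the same label, icon, and color.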

Survey Outcome

The survey (n=10) confirmed the five thematic needs identified in earlier interviews:

Safety, simplicity, clarity, user control, and trust in automation were consistently rated as most important.

70% of participants reported feeling overwhelmed or distracted by urban noise at least once a week, and 80% said they would consider using an adaptive audio system if it helped them stay more aware in busy environments.

Feedback also emphasized the need for easy overrides, visual feedback, and minimal screen interaction.

Unique Features

- Selective super hearing: Enhances specific sounds while reducing background noise in real time.

- Smart prioritization: Identifies and emphasizes important sound sources based on user context.

- Audio augmentation: Adds realism through improved sound quality and ambient effects.

- Ultrasonic conversion: Makes otherwise inaudible signals (like gas leaks) audible.

- User training: Users can teach the system to recognize and locate specific sounds in complex environments.
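To make selective enhancement and smart prioritization more concrete, here is a minimal sketch that assumes an upstream classifier has already labeled each detected sound with a category and a confidence score. The mode names come from the concept sketches ("city", "machine", "crowd"). The gain values, the confidence threshold, and all function and field names are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical per-mode gain multipliers (1.0 = unchanged).
MODE_GAINS = {
    "city": {"alert": 2.0, "voice": 1.0, "ambient": 0.4},
    "machine": {"alert": 2.0, "voice": 1.5, "ambient": 0.2},
    "crowd": {"alert": 1.5, "voice": 1.8, "ambient": 0.5},
}


@dataclass
class DetectedSound:
    name: str
    category: str      # "alert", "voice", or "ambient"
    confidence: float  # classifier confidence in [0, 1], assumed to be given


def prioritize(sounds, mode, overrides=None, min_confidence=0.6):
    """Return (name, gain) pairs sorted from most to least emphasized."""
    overrides = overrides or {}
    gains = MODE_GAINS[mode]
    result = []
    for sound in sounds:
        if sound.name in overrides:
            gain = overrides[sound.name]  # a user override always wins
        elif sound.confidence >= min_confidence:
            gain = gains[sound.category]  # apply the active mode's gain
        else:
            gain = 1.0  # low-confidence detections are left unchanged
        result.append((sound.name, gain))
    return sorted(result, key=lambda pair: pair[1], reverse=True)


sounds = [
    DetectedSound("siren", "alert", 0.9),
    DetectedSound("traffic", "ambient", 0.8),
    DetectedSound("chatter", "voice", 0.3),
]
ranked = prioritize(sounds, "city")
```

In this sketch, low-confidence detections pass through unmodified rather than being boosted or suppressed, which is one way to limit the impact of false positives. The `overrides` argument reflects the survey feedback asking for easy overrides.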

Reflections & Learnings

- Even speculative design benefits from user research and validation

- Designing for sound requires new mental models — we “hear” context differently than we see it

- Trust and transparency are essential when users can’t directly “see” what AI is doing

- This project helped me apply user-centered methods to complex, intangible interaction models (like hearing, presence, and ambient systems)